Table of Contents

Tutorial

- Single HTML file (version September 2015)

- PDF file (version September 2015)

- Tests and examples - source code tarball (version September 2015)

Very short Tutorial

The FastFlow programming model

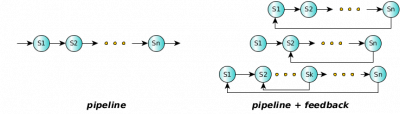

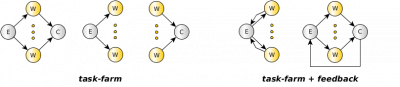

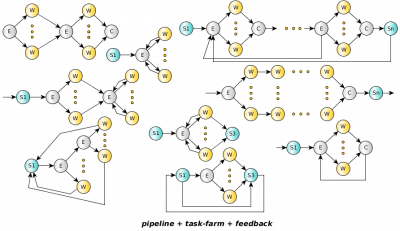

The FastFlow programming model is a structured parallel programming model. The framework provides several pre defined, general purpose, customizable and composable parallel patterns (or algorithmic skeletons). Any applications whose parallel structure may be modelled using the provided parallel patterns, used alone or in composition, may be implemented using FastFlow.

The basic

Pipeline

Pipelining is one of the simplest parallel pattern where data flows through a series of stages (or nodes) and each stage processes the input data in some way producing as output a modified version or new data. We will call the data flows streams of data or simply streams. A pipeline's stages can operate sequentially or in parallel and may have or not have an internal state.

/* this is a 3-stage pipeline example */ #include <iostream> #include <ff/pipeline.hpp> using namespace ff; typedef long fftask_t; struct firstStage: ff_node_t<fftask_t> { fftask_t *svc(fftask_t *t) { for(long i=0;i<10;++i) ff_send_out(new fftask_t(i)); return EOS; // End-Of-Stream } }; fftask_t* secondStage(fftask_t *t,ff_node*const node) { std::cout << "Hello I'm stage" << node->get_my_id() << "\n"; return t; } struct thirdStage: ff_node_t<fftask_t> { fftask_t *svc(fftask_t *t) { std::cout << "stage" << get_my_id() << " received " << *t << "\n"; delete t; return GO_ON; } }; int main() { ff_Pipe<> pipe(make_unique<firstStage>(), make_unique<ff_node_F<fftask_t> >(secondStage), make_unique<thirdStage>() ); if (pipe.run_and_wait_end()<0) error("running pipe"); return 0; }

Farm

The task-farm pattern is a stream parallel paradigm based on the replication of a purely functional computation (let's call the function F). Its parallel semantics ensures that it will process tasks such that the single task latency is close to the time needed to compute the function F sequentially, while the throughput (under certain conditions) is close to F/n where n is the number of parallel agents used to execute the farm (called Workers). The concurrent scheme of a farm is composed of three distinct parts: the Emitter (E), the pool of workers (Ws) and the Collector (C). The emitter gets farm's input tasks and distributes them to workers using a given scheduling strategy (round-robin, auto-scheduling, user-defined). The collector collects tasks from workers and sends them to the farm's output stream.

/* the third stage is transformed in a farm */ #include <ff/farm.hpp> #include <ff/pipeline.hpp> ... int main() { std::vector<std::unique_ptr<ff_node> > W; // the farm has 2 workers W.push_back( make_unique<thirdStage>()); W.push_back( make_unique<thirdStage>()); ff_Pipe<> pipe(make_unique<firstStage>(), make_unique<ff_node_F<fftask_t> >(secondStage), make_unique<ff_Farm<fftask_t> >(std::move(W)) ); if (pipe.run_and_wait_end()<0) error("running pipe"); return 0; }

ParallelFor/Map

A sequential iterative kernel with independent iterations is also known as a par- allel loop. Parallel loops may be clearly parallelized by using the map or farm patterns, but this typically requires a substantial re-factoring of the original loop code with the possibility to introduce bugs and not preserving sequential equivalence. In the FastFlow framework there are a set of data parallel patterns implemented on top of the basic FastFlow skeletons to ease the implementation of parallel loops: ParallelFor, ParallelForReduce, ParallelForPipeReduce.

Heare a very basic usage example of the ParallelFor pattern:

#include <ff/parallel_for.hpp> using namespace ff; int main() { long A[100]; ParallelFor pf; pf.parallel_for(0,100,[&A](const long i) { A[i] = i; }); ... return 0; }

Data Dependency Tasks Executor (aka MDF)

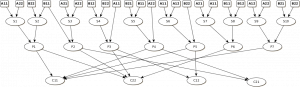

The data-flow programming model is a general approach to parallelization based upon data dependencies among a program's operations. The computations is expressed by the data-flow graph, i.e. a DAG whose nodes are instructions and arcs are pure data dependencies. If instead of simple instructions, portions of code (sets of instructions or functions) are used as graph's nodes, then it is called the macro data-flow model (MDF). It is worth noting that, the data-flow programming model is able to work both on stream of values and on a single value.

As an example, considering the Strassen's algorithm described by the following sequence of instructions operating on (sub-)matrices :

S1 = A11 + A22; S2 = B11 + B22; S3 = A21 + A22; S4 = B12 - B22; S5 = B21 - B11; S6 = A11 + A12; S7 = A21 - A11; S8 = B11 + B12; S9 = A12 - A22; S10 = B21 + B22; P1 = S1 * S2; P2 = S3 * B11; P3 = A11 * S4; P4 = A22 * S5; P5 = S6 * B22; P6 = S7 * S8; P7 = S9*S10 C11 = P1 + P4 - P5 + P7; C12 = P3 + P5; C21 = P2 + P4; C22 = P1 - P2 + P3 + P6;

the resulting DAG is sketched in the following figure:

The DAG's instructions can be executed in parallel simply respecting data dependencies.